A cloud native architecture for SAP NetWeaver

“You may say I’m a dreamer

But I’m not the only one

I hope some day you’ll join us

And the world will be as one”

Imagine – John Lennon

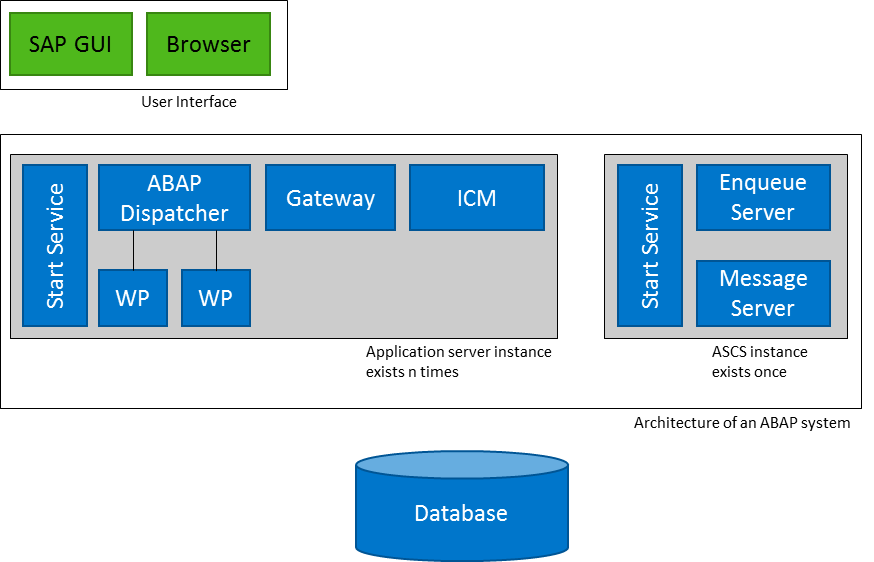

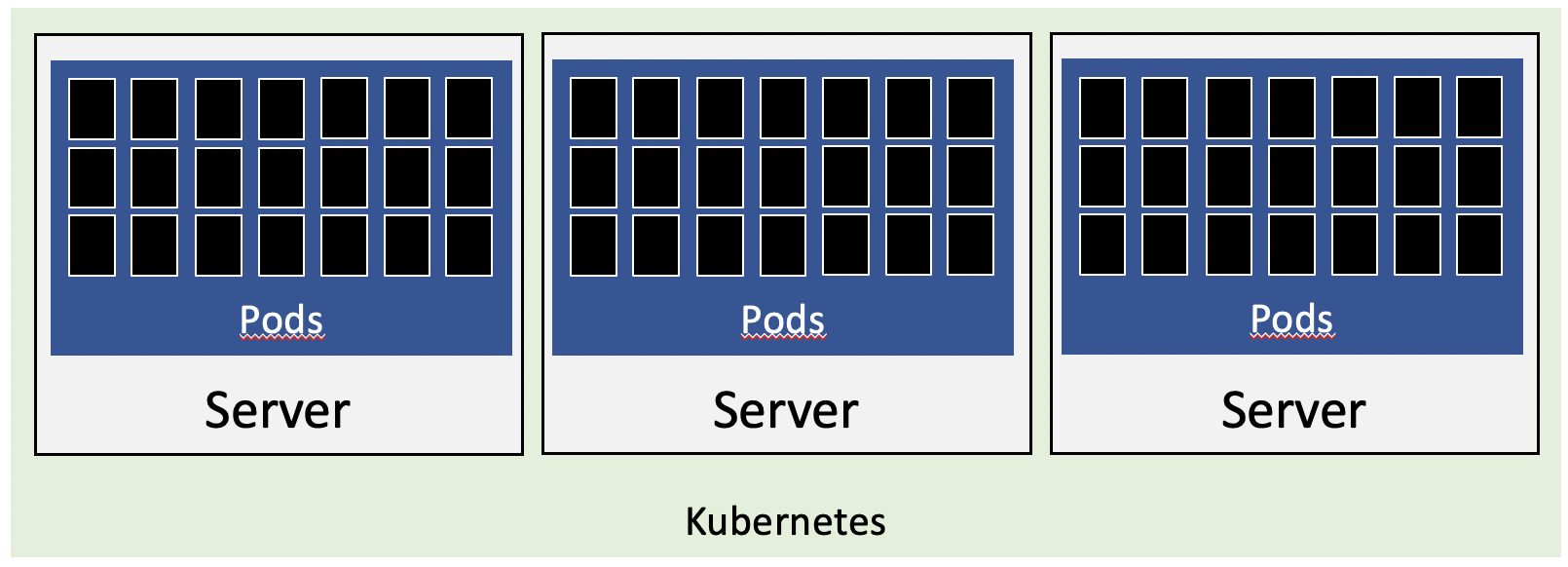

The underlying platform for SAP’s ERP solutions is SAP NetWeaver ABAP, regardless of whether the system runs on-premises or in the cloud, public or private, and whether it is SAP ERP 6.0, S/4HANA or BW. The base architecture of a NW ABAP system consists of a central services instance (ASCS) and several dialog instances (DIA). Additional services run on these, most importantly the work processes (WP). This architecture is not new: it is already 25+ years old.

Image 1: SAP NetWeaver ABAP architecture

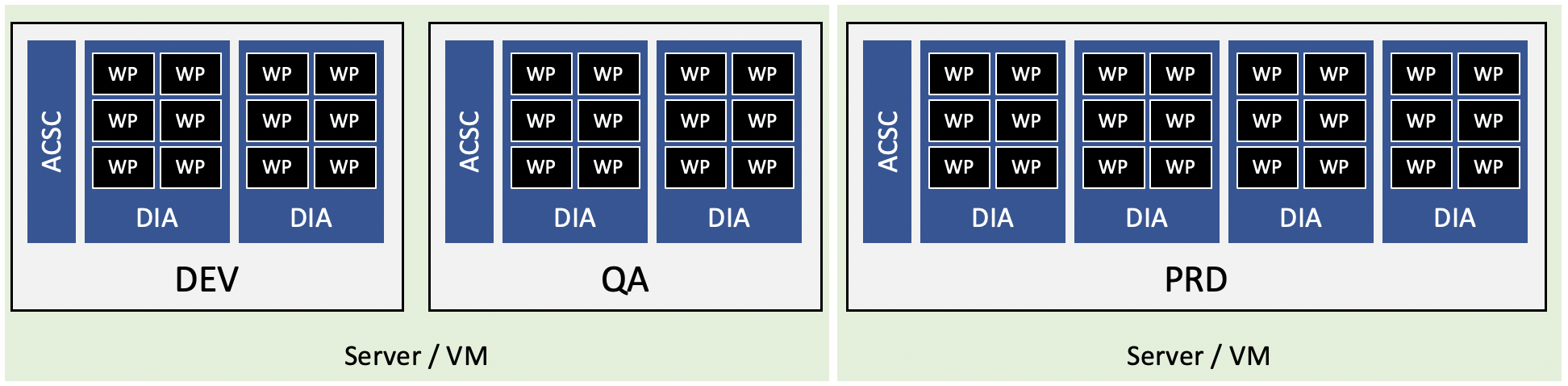

The WP are an important component. Here the actual work is done, as a user is assigned to a WP to run SAP programs. If no WP is available, the user cannot work with SAP. In a typical configuration, you assign X WP to a DIA and Y DIA to an SAP system. The number of WP you can assign to a DIA is limited mainly, but not only, by the available resources like RAM. One implication: if you need many WP, you have to install additional DIA to run them. Simplified, if your limit is 200 WP per DIA and you need 1,000 WP, you have to run 5 DIA. With this comes all the work of adding these DIA to the cluster. To ensure users work with a properly sized system, SAP invented SAPS, and many Basis consultants were very busy transforming sizing into an art.
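To make the arithmetic behind the 200 WP per DIA example explicit, here is a minimal Python sketch. The function name `dialog_instances_needed` is my own illustration and not part of any SAP tool, and real sizing depends on far more than a single per-DIA limit.

```python
def dialog_instances_needed(required_wp: int, max_wp_per_dia: int) -> int:
    """Return the smallest number of DIA that can host required_wp work processes.

    Hypothetical helper: it assumes every DIA has the same WP limit.
    """
    if required_wp < 0 or max_wp_per_dia <= 0:
        raise ValueError("required_wp must be >= 0 and max_wp_per_dia must be > 0")
    # Integer ceiling division: a partially filled DIA still has to be installed.
    return -(-required_wp // max_wp_per_dia)


if __name__ == "__main__":
    print(dialog_instances_needed(1000, 200))  # 5
    print(dialog_instances_needed(1001, 200))  # 6
```

The second call shows the scaling problem in a nutshell: a single additional WP beyond the limit forces you to add a whole new DIA.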

Image 2: Work Processes

The overall architecture may be old, yet it shows that it was designed with scalability in mind. The option to have WP run the actual workload and to distribute them across DIA that “just” serve as a process starter is more future-proof than it looks at first sight. However, this architecture currently leaves a big footprint in SAP landscapes. Scaling an SAP system is not as easy as it looks. For peak loads, you have to bring a new DIA into the cluster. Afterwards, you have to remove the DIA again. And all this just to add a few WP so that end users can keep working when the system is under peak load.

This adds unnecessary costs and administration overhead. What is missing to make NW architecture cloud aware or even cloud native? How to bring this to Kubernetes (K8s)? And why?

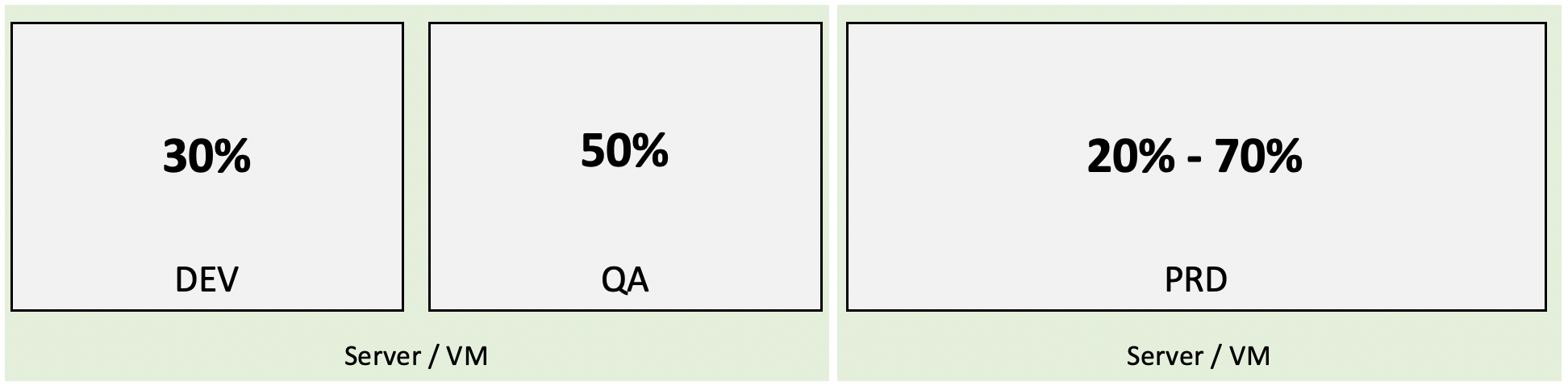

Because we have to

An SAP system landscape consists of at least DEV, QA and PRD, often with additional sandbox, testing and training systems and, to make it more complex, with a project and a stable lane. To make this cost efficient, many of these systems run inside a VM on some powerful shared hardware. And still: most of the time, resources are wasted. A test system with one ASCS and 2 DIA runs 24/7, while it receives enough load to occupy 80% of its resources for maybe only a few hours a week. Tearing the system down and starting it when needed sounds like a good solution. In reality, you then need a way to start the system on time and to ensure that it is working. Most of the time, it is easier and more cost efficient to just leave the system running. The NW components are virtualized, but the configuration is not. A certain number of DIA and WP is configured, and when the system is up and running, a fixed amount of resources is allocated.

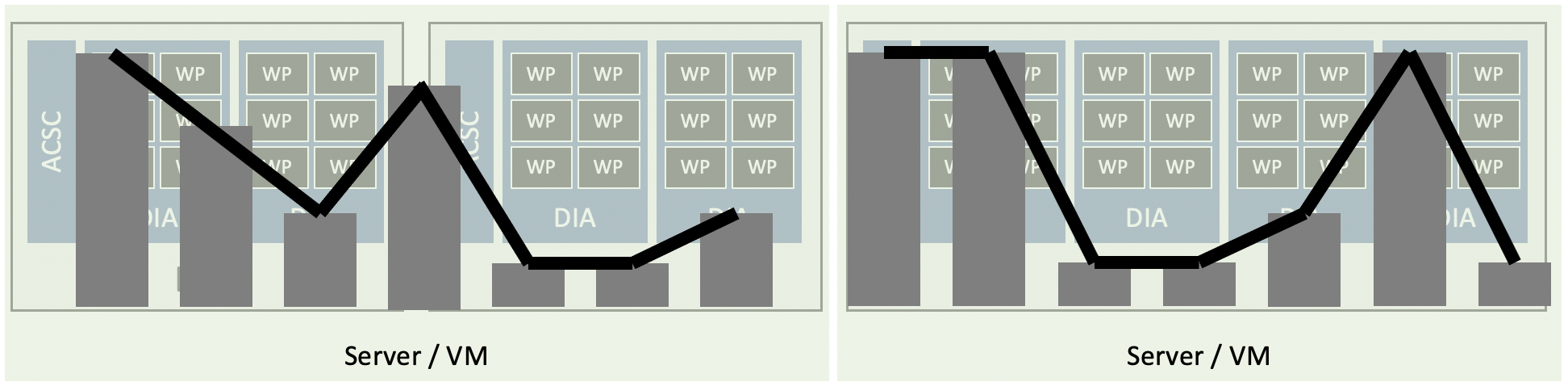

How efficiently are these allocated resources used? It depends on the usage pattern, system type, or processes executed. No system uses 100% of its resources all the time. Peaks and lows are normal. People go out for lunch, stop working on weekends and at night, are stuck in meetings, etc. The server running the VMs cannot reassign free resources fully dynamically. If DEV is idle, its CPU is available to others, but it still occupies its reserved RAM. Usage gaps occur.

Looking at a given time frame (day, week or month), you’ll see that the average resource consumption is far lower than what the system is configured for. Your DEV system may use only 30% of its assigned resources, while PRD may oscillate in a wide range of 20% to 70%. At some point the developers go home and log out. In theory, the VM host running DEV and QA then has enough resources left to run the TEST system, but it cannot, as the configuration for DEV and QA reserves too many resources.

Cloud

Keeping the system running with its sizing configured for the worst-case scenario adds costs. You just want to be prepared for the moment when 30+ developers start developing apps, or when a business process needs more resources because of a special sales promotion. This also means that companies pay for resources they do not need, be it on-premises or in the cloud. Running your SAP landscape in the cloud makes total sense. It allows you to save costs compared to on-premises. Unfortunately, not automatically. You still have to run your landscapes in an optimized way. Current offerings are IaaS for SAP on hyperscalers. Adding a new DIA can be done without waiting for a server to be ready, and after decommissioning it you no longer pay for a server sitting in the data center. Yet to really save money, you have to ensure that no non-productive system runs 24/7 in the cloud, which gives you the same problem as with your on-premises system. Resources are wasted because the SAP system is sized not for the normal usage scenario, but for the edge cases. Even when you size it for the expected normal usage, you are still either wasting resources when the actual usage is lower, or getting into trouble when usage increases.

Cloud native NetWeaver architecture

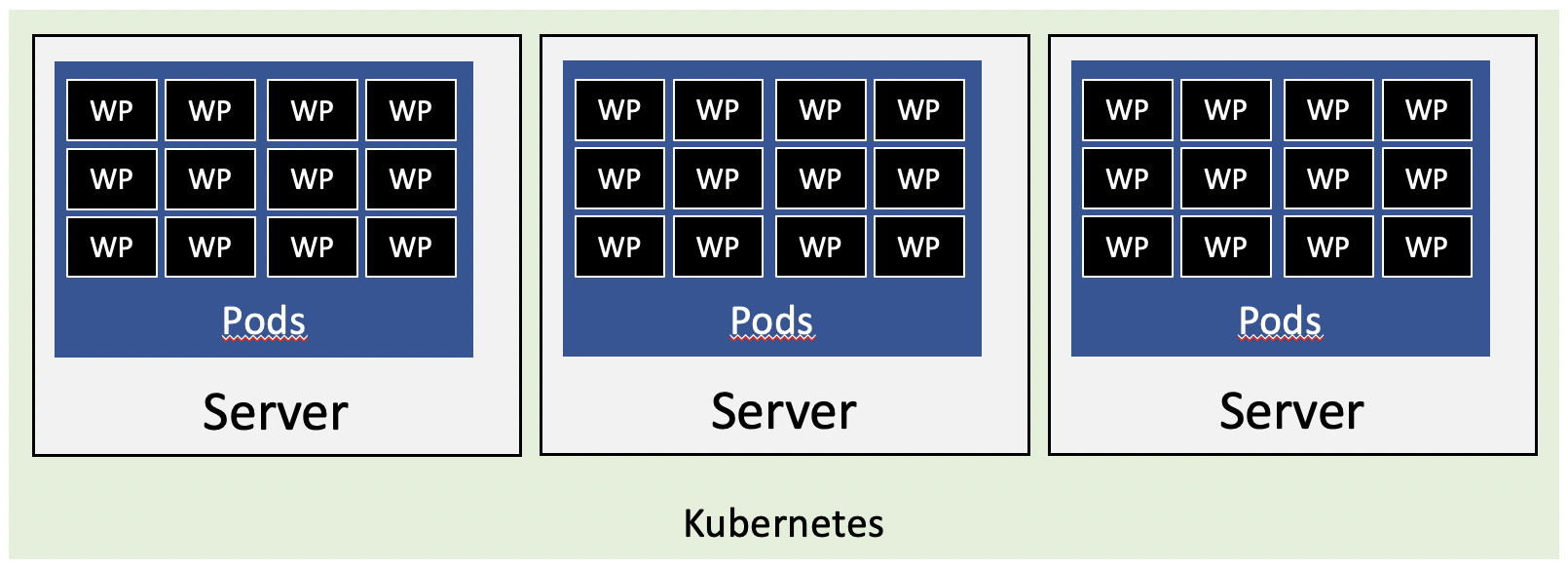

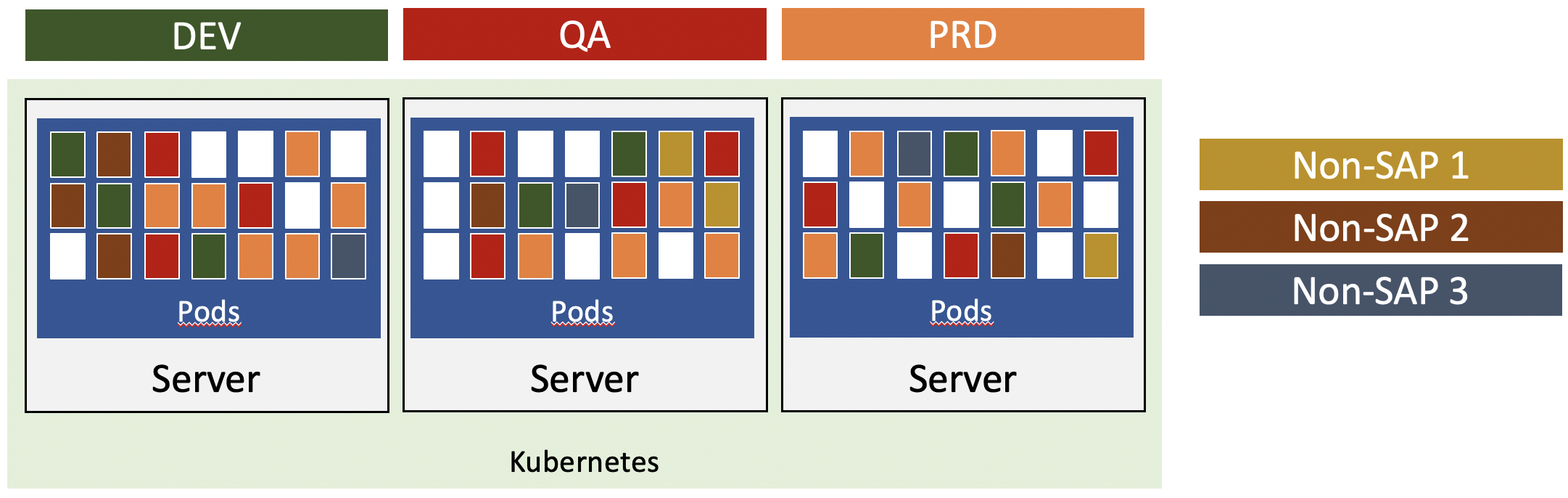

With cloud IaaS being only a partial solution for running SAP landscapes and workloads efficiently in the cloud, what could be a better alternative? To benefit from the cloud, you need cloud native apps. For SAP, this means you need a cloud native NetWeaver architecture. One option for running cloud native apps is Kubernetes (K8s). With K8s, you have a cluster spanning several servers, and these run your containerized apps in pods.

Using K8s as a starting point, how can this be used for NW?

As a WP is the smallest unit, it becomes the containerized app. It needs to be deployed in such a way that it can talk to other important components like ASCS, ICM or Gateway. K8s takes care of communication between these components. ASCS could be deployed independently or in K8s too. As for ICM: regrettably, ICM is SAP proprietary. Years ago, I asked SAP to offer the ICM functionality as Apache or Nginx plugins. Well, my request was declined. This would help tremendously, as Nginx is widely used in K8s as an ingress controller for incoming requests.

Seeing WP as pods allows them to be started and stopped as needed. The K8s cluster controls the WP lifecycle, not the sizing someone did maybe years ago. The number is highly dynamic, as pods get started when needed and killed once they are no longer used. K8s does not care whether a pod is part of DEV, QA or PRD. It starts it when needed.
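How could such a dynamic number of WP pods be derived? A minimal Python sketch, assuming WP pods are scaled on a utilization metric in the same way the Kubernetes Horizontal Pod Autoscaler documents its calculation (desired replicas = ceil(current replicas × current metric / target metric)). The metric "percentage of busy WP" and the function `desired_wp_pods` are hypothetical; nothing like this ships with NetWeaver today.

```python
def desired_wp_pods(current_pods: int, busy_percent: int, target_percent: int,
                    min_pods: int = 1, max_pods: int = 50) -> int:
    """HPA-style scaling decision for hypothetical WP pods.

    busy_percent:   observed share of busy work processes (assumed metric)
    target_percent: utilization the cluster should aim for
    """
    if target_percent <= 0:
        raise ValueError("target_percent must be > 0")
    # ceil(current_pods * busy_percent / target_percent) using integer arithmetic
    raw = -(-(current_pods * busy_percent) // target_percent)
    # Clamp to the configured bounds, as an autoscaler would.
    return max(min_pods, min(max_pods, raw))


if __name__ == "__main__":
    print(desired_wp_pods(10, 90, 60))  # 15: peak load, scale out
    print(desired_wp_pods(10, 15, 60))  # 3: quiet period, scale in
    print(desired_wp_pods(10, 0, 60))   # 1: idle, keep only the minimum
```

The point of the sketch is that the number of WP follows the observed load, within bounds, instead of a fixed value from a sizing exercise.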

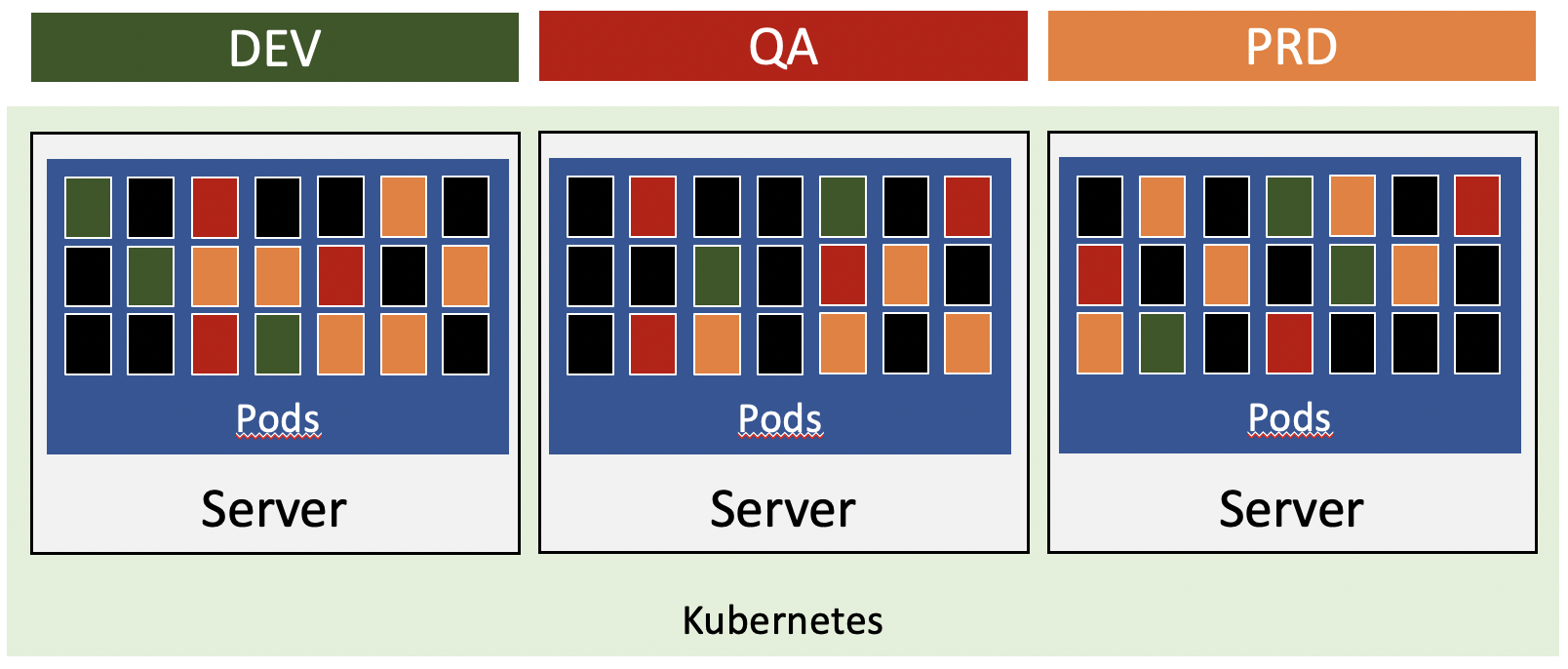

No more non-productive servers

Looking at the DEV, QA and PRD example, this means that they are deployed on the same K8s cluster. Your DEV, QA and PRD systems occupy the resources they need, not the ones they were once configured for. K8s ensures that the WP are started as needed and distributed across the cluster. As long as enough resources are assigned to the overall cluster, you do not have to worry about actual usage and sizing. The landscape scales automatically: it is the landscape that scales, not just one system, because the systems use shared resources. This makes running such a solution very efficient, in terms of both resources and costs.
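Why does sharing help? A small Python sketch with made-up numbers: if every system is sized on its own, you pay for the sum of the individual peaks. If the landscape shares one pool, you only need the peak of the combined demand. The hourly WP demand figures below are purely hypothetical and only serve to illustrate the effect.

```python
# Hypothetical WP demand per system over six time slots (not measured data).
demand = {
    "DEV": [5, 20, 30, 10, 2, 0],
    "QA":  [0, 5, 10, 25, 5, 0],
    "PRD": [20, 40, 60, 70, 50, 30],
}


def sum_of_peaks(demand_by_system: dict[str, list[int]]) -> int:
    """Capacity needed when each system is sized for its own peak."""
    return sum(max(series) for series in demand_by_system.values())


def peak_of_sum(demand_by_system: dict[str, list[int]]) -> int:
    """Capacity needed when all systems share one pool of resources."""
    return max(sum(slot) for slot in zip(*demand_by_system.values()))


if __name__ == "__main__":
    print(sum_of_peaks(demand))  # 125 WP when sized per system
    print(peak_of_sum(demand))   # 105 WP when sized for the shared landscape
```

In this toy example, the shared pool needs 20 WP less, simply because DEV, QA and PRD do not all peak at the same time.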

Hybrid

Pods are not bound to run a specific container. They can run any app that is in a container. Your K8s cluster therefore is not bound to just run SAP. You can deploy an application stack to K8s and it will take care of dynamically assigning available resources across the cluster. A Java or Node.js app may be deployed on the same K8s cluster, and the apps share resources as needed. Solutions that share the same SLA can run in the same cluster.

Breaking NW down into small units has the potential to drastically reduce the costs associated with SAP landscapes. The servers running the pods don’t have to be super big. They just need to be large enough to run pods efficiently. If the actual load goes down, the pods run on a few servers in the cluster, and the others are shut down dynamically. Sharing resources on a K8s cluster between SAP and non-SAP workloads will result in a shift of perception: it’s not SAP and non-SAP, it’s one. Customers’ investments in K8s will benefit from this kind of architecture. Instead of running and supporting different kinds of stacks like K8s and IaaS, they can focus mainly on one stack. Setting up a new landscape could be as easy as “just” having to deploy a Helm chart.

Outlook

What makes this architecture proposal so interesting is the fact that it works for on-premises and cloud deployments. And not just for SAP or just for customers: it works for both, in all scenarios. A customer can cost efficiently host their own SAP systems on their K8s cluster, together with other non-SAP apps, whether on-premises, in their private cloud or on a hyperscaler. Using a Helm chart to deploy an SAP system on K8s gives the option to easily run it on another hyperscaler. DEV and QA may run on a hyperscaler, while PRD is on your on-premises K8s cluster. How you deploy an SAP system won’t change, only the location does.

This is not groundbreaking new information. If you have a little K8s and SAP NW knowledge, you can easily see this potential. The question is: is SAP going to provide an update of the NW architecture and include a cloud native option? If so, is it just for their NW cloud offering or will it be available to all? Honestly, I cannot see us running an S/4HANA system in 2035 the same way we do today. We need a change in architecture. And we need it fast.

1 Comment

Edgar Martinez · February 27, 2021 at 14:12

Hi Tobias,

I think this would be a very useful approach to better handle resources and scale the SAP systems.

One question for the proposed architecture would be, could the rest of the pods be used for something else while not active?

I’m thinking about giving the user the option of (or even providing) some sort of auxiliary worker process for a different task or maybe a convenient application, something that can produce an output but that could be shut down in case the SAP system requires the computing power.

If something like that were possible, it could be an extra incentive for the users (both in computing capacity and financially) and an extra reason to move the SAP systems to a cloud native architecture.