Enhance your AI with RAG

Make your AI understand your reality by adding content via RAG. The problem is getting LLM-friendly content from websites.

Read More

Where documentation meets reality

MCP is seen as a problem-solving tool for SAP development. However, what SAP's MCP servers do shows that AI is going to be a big problem for customers.

Read More

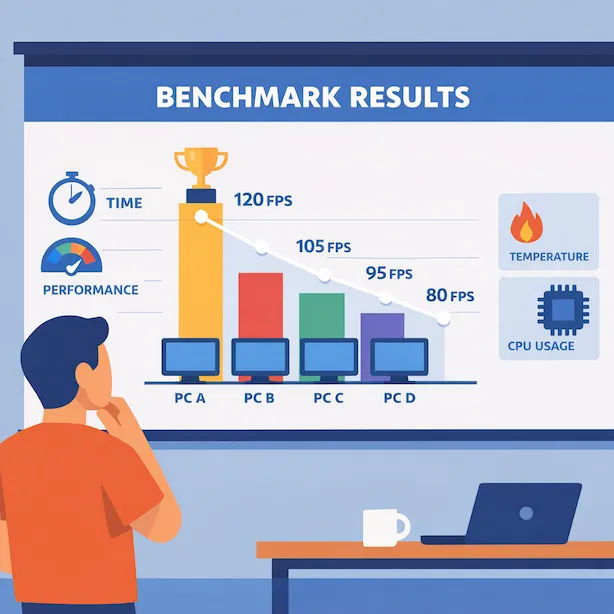

You can choose from a wide range of LLMs for coding. But how well do they perform for SAP coding? What about a benchmark for LLMs specific to SAP coding?

Read More

Exception documentation for SAP solutions does exist. Developers would benefit from having it everywhere.

Read More

SAP's marketing is all about cloud, BTP, AI and, for developers, CAP. These are things you do not easily find at customers.

Read More

CVA is SAP's recommended tool for scanning ABAP code for security vulnerabilities. Yet, somehow, SAP reports security issues with an elevated CVSS score.

Read More